This is the first in a series of posts on lessons learned in running large data teams and large-scale data projects in SE Asia. This first post focuses on organization structure, the second on foundational practices, and later posts will talk about ways you can improve your own teams as they grow.

Not too long ago, data teams were a novelty: suddenly everyone wanted to “do AI” and “Big Data”, and no one knew how to hire or what to do. A common approach was to hire a whack of PhDs with quantitative backgrounds, put them off in a corner, and have them do “data science”, expecting magic to somehow happen.

Often the pedigrees were fine, but the lack of business alignment led to a lot of wasted time, little impact, and a bad taste in companies’ mouths about “data science” hype versus reality.

The alternative was the unicorn-hire approach: try to land an all-in-one Jill-of-all-trades, master-of-all, and then have them suffer prioritization whiplash as competing groups, or some self-appointed “AI visionary” for the organization, had them leap from one sexy-sounding project to the next until they burned out, table flipped, and left.

Those were the somewhat dark days of data science, and not as long ago as we’d all like to pretend. These were the “me too” days, when executives ran around trying to replicate teams or infrastructure from stories they’d read in blog posts from company X or heard at conferences from corps and aspiring startups, believing they were falling behind in the “new oil” arms race.

Times are a bit better now, but I still speak to lots of companies that know they need to do something about data but don’t know how to go about it, what to look for in hires, how to set a vision for their group, or what to put in place to attract the talent they may need. Even worse, they have no framework for measuring success in data beyond the assurances of the executive in charge or some sexy-looking conference talks on flashy-sounding technologies.

What Data Teams Do

Producing impact is the primary goal of a data science team. Better decisions faster, at scale.

To de-mystify data teams: their job is to uncover the hidden or assumed in your business and apply the Scientific Method to it. This is where the scientist part of Data Scientist comes in (which confuses a lot of people, even data scientists themselves). Much like scientists in other disciplines, good data scientists are in the business of asking hard questions, coming up with hypotheses, and then testing those hypotheses with the data and experiments they can run. They do this with the organization’s data in the service of the business, and build tools to predict or label what their science finds out.

Good data teams are revenue generators, not cost centres. If you have a data team that cannot unambiguously point to delivering value well in excess of its overall departmental cost, your data team is under-performing (or, alternatively, you need to pay much closer attention to cost containment or cutting). For example, my last data team delivered 6-18X the value of our all-in cost including personnel, depending on which group was involved and how you sliced it (and, personally, I believe it could and should have been higher than that if not for a lot of corporate hygiene issues).
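As a rough illustration of the value-versus-cost check described above, here is a minimal sketch. The function name, the group names, and every figure are hypothetical and chosen only to make the arithmetic easy to follow; how you attribute "value delivered" to a team is the hard part and is not addressed here.

```python
def value_multiple(value_delivered: float, all_in_cost: float) -> float:
    """Return value delivered per unit of all-in cost (6.0 means 6X)."""
    if all_in_cost <= 0:
        raise ValueError("all_in_cost must be positive")
    return value_delivered / all_in_cost


# Hypothetical figures for illustration only, not taken from any real team.
groups = {
    "analytics": {"value": 3_000_000, "cost": 500_000},
    "products": {"value": 9_000_000, "cost": 500_000},
}

# Multiple per group, then for the department as a whole.
multiples = {
    name: value_multiple(g["value"], g["cost"]) for name, g in groups.items()
}
overall = value_multiple(
    sum(g["value"] for g in groups.values()),
    sum(g["cost"] for g in groups.values()),
)
```

Computing the multiple both per group and overall matters because, as noted above, the answer depends on which group is involved and how you slice it.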

Data teams do this by creating new revenue streams, greatly reducing costs through efficiencies, or improving and speeding up decisions so the company can seize competitive advantages it did not previously have. In highly competitive markets, data teams are a source of huge competitive advantage and something smart CEOs double down on against less clever incumbents and the 800 lb gorillas in their categories. Shaving a few percentage points off fraud rates, customer service team growth, or CAC, or improving customer retention, purchasing propensity, purchase frequency, or LTV, ultimately drives a company’s bottom line and success.

I generally bucket what large data teams do into three main groups before you need to specialize by business unit and squads. You can functionally organize from the start and dump an analyst, scientist, and engineer in each business functional group. But without strong governance, leadership, education, and resourcing to handle higher-level issues, coordination, and infra, you’re going to run into trouble. The exception is if you can run project- or product-based data science and have strong BU leaders who grasp the potential of data science and data in general (beyond what they’ve read in a McKinsey report or Forbes article) and can integrate that potential into their engineering, business decision-making, and product management.

Data Teams do 3 things:

- Analytic data scientists generate actionable insights to guide better business decision making around risks, opportunities, or product features. Good ones focus on the Why and What Should We Do rather than the What Happened (which is more the domain of automated BI).

- Production data scientists create models and APIs which deploy to production to allow other business systems to automate decisions and act on them (e.g. segment a user, predict their churn rate or LTV, determine credit worthiness, detect fraud, recommend a product to buy).

- Platform and data engineers create infrastructure, pipelines, data modelling, and tooling to streamline producing analytics or model APIs, making it faster, cheaper, easier, and reproducible.

This is how we organized and attracted talent over a nearly 3 year period in the (very) tight SE Asian talent market for data scientists, machine learning engineers, and data engineers, and went from ~50 people to 125+. While there’s no one-size-fits-all way to organize your team, and you need to apply common sense about your organization’s strengths and weaknesses to any organizing model, this worked for my last organization. It took us from an underperforming, come-from-behind position with zero data science models in production to where I felt we stood toe-to-toe with the best in the region (I may be biased though). YMMV on whether this approach works for you, but it should definitely help you think at a higher level about organizational design and strategy.

An Organizing Model

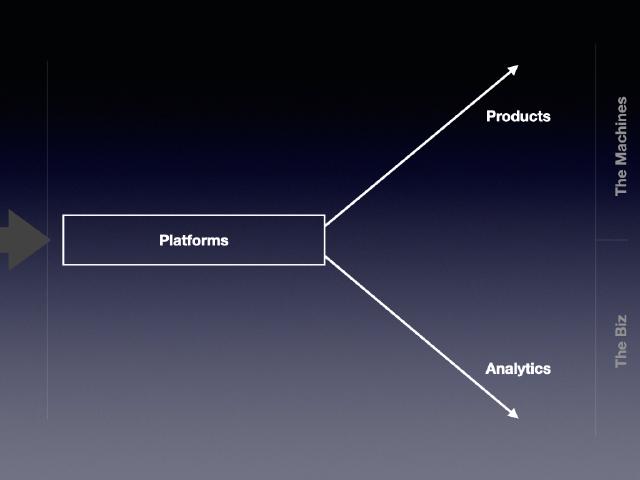

The three groups inform an organizing model. Taking a page from Andy Grove’s classic High Output Management, you can think about a “factory” model of Platforms, Products, and Analytics.

Data is your raw material. It flows in from the left of the diagram from your products, customers, and third party data providers to Platforms.

There it’s collected, corrected, cleaned, combined, enriched, and shaped into useful, easily queryable and exploitable forms. It is then exposed either to Data Products, which creates models and APIs that interface directly with other systems in the organization (the Machines), or to Analytics, which uses the shaped info to mine for insight and work with business leaders and senior decision makers on direction, strategy, campaigns, and product features (the Biz). You can think about those two groups as Data Science for the Humans and Data Science for the Machines.

From a top-level management perspective, your job as the CDO/Veep is to improve the impact, efficiency, outcomes, throughput, and processes that keep the factory humming and delivering products and insights which drive demonstrable business value (profits/savings/competitive advantage) at the lowest possible cost and quickest speed.

Let’s drill down a little on each of the groups:

Platforms

Data platforms are responsible for the technology, systems, pipelines, and decisions surrounding recording, collecting, correcting, combining, enriching, shaping, and exposing data to the company with high availability and discoverability. The APIs they produce need to be extremely hardy and resilient, and need to scale efficiently and cost-effectively to provide data in accessible formats to both Products and Analytics.

Why do you need a separate platforms group, especially if the company already has engineering or an IT group?

Engineering concerns surrounding big D “Data” are quite different from those associated with product engineering or corporate IT. There’s a greater emphasis on the data itself and on what and how it’s recorded, modelling for efficient exposure and querying, discoverability, pipelines, data structures, efficiency, distributed systems, and streaming than in product engineering. There’s a higher premium on efficient, reproducible ways of doing things than on the one-off features typical of product engineering. There’s also a higher requirement for understanding large, growing datastores and efficient and immutable ways to move, store, and query data, and a fundamental concern with systems that can scale (as well as having data in an accessible, coherent format for people).

For example, the central data store APIs we used in my last role were built to be hit by every product line the company had, so they needed to be much more resilient and performant than any individual product in a BU, as well as having more robust failover and scalability. Providing a consistent, coherent, documented means for product engineers to access those data points in a robust way and get what they wanted out of the system was key (we used a GraphQL interface for standardizing queries amongst diverse data sets).

Analytics

Data science for Humans.

Good data scientists here were responsible for helping shape decision making, driving good decision-making processes (e.g. Experiments), and producing actionable insights to bring data and coherence to decisions by business unit and organizational leaders. These people were generally combos of business savvy, stats, good ol’ fashioned querying, and presentation skills, helping to influence the way the company needed to think about the business to make better decisions. Many started bridging the gap to production data science by building models that were deployable and usable by the business for repeatable, automated decision making (pricing, segmenting etc.), though fundamentally their role was to help shape and guide the business.

This team ended up working closely with the Products Experiments team (we built out a new platform on top of Facebook’s PlanOut library to allow greater flexibility in our Experiments capability). This was key in trying to help upgrade the Product Management function in the company from a project management concern focused more on feature execution (output) to a more mature and developed product management capability concerned with the outcomes of the product features built and with better lifecycle management. The move from roll-the-dice attempts at new features to more data-driven experiments that helped move organizational needles was an important, if under-rated, step for the company. It is also an excellent example of how data drives better outcomes both through what gets built and, even more to the point, what it informs us not to build.

It generally makes sense to structure these groups directly along business and functional unit lines to provide support. Maturity in these areas meant moving from presentations on what happened each month to more actionable analyses of what the business could or should be doing (as we used to say, moving from “What happened?” to “Why?”).

The biggest challenge here is making sure you have sufficient expertise and analytical insight on the team for your analysts to be taken seriously, and not just be BI reporters or “data waiters” for business unit decision makers. Insight and its consequences should be quantifiable and readily measurable. If you can’t point back at fundamental contributions this team has made in changing the business, you need to re-examine whether you have true analysts or project managers.
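To make the idea of an experiments capability a little more concrete, here is a minimal sketch of deterministic, hash-based variant assignment, which is the general technique experiment frameworks of this kind tend to rely on. This is not PlanOut's API and not the platform described above; the function name, experiment name, and user ids are all hypothetical, and only the Python standard library is used.

```python
import hashlib
from typing import Sequence


def assign_variant(experiment: str, user_id: str, variants: Sequence[str]) -> str:
    """Deterministically map a user to a variant by hashing experiment + user id."""
    if not variants:
        raise ValueError("variants must not be empty")
    # Salting the hash with the experiment name means a user's bucket in one
    # experiment does not determine their bucket in another.
    digest = hashlib.sha1(f"{experiment}.{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:15], 16) % len(variants)
    return variants[bucket]


# Hypothetical experiment: the same user always gets the same variant,
# with no assignment table to store or look up.
variants = ["control", "new_checkout"]
first = assign_variant("checkout_flow_v2", "user-123", variants)
second = assign_variant("checkout_flow_v2", "user-123", variants)
```

Because assignment is a pure function of the experiment name and the user id, any service can compute it independently and analysts can reproduce it later when measuring outcomes.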

Products

Data Science for Machines.

Products is the area I would say many people think of when they hear “data science”, though really it’s an integral part of a more balanced and diverse team. The data scientists here create models and APIs which other systems call directly to make decisions (e.g. labelling, recommending, predicting etc.) for systems like production business unit apps, functional units (e.g. marketing CAC/CLV, fraud models for payments/credit), or even other systems in the Data team itself (pipelines that call a model needed to augment or check/clean user data, for example).

Besides data scientists pounding away in Jupyter notebooks, you also tend to have your machine learning engineers working here to make sure that the models built are scalable, highly efficient, and resilient in production. Increasingly, we had moved to a model where we wanted data scientists to be able to have any API for a model they built just run in production on Kubeflow or the deployment framework we’d created (Raring Meerkat). But when a model is not as performant or as efficient as it should be, or you need to redevelop a Python model in something like Go for performance reasons, or there are scalability issues, this is where your machine learning engineers come in. They were also really keen on building frameworks and process tooling to make the time from development to getting a model into production as short as possible.

Quite often these engineers will also be building pipelines from the main Platform stores to create features that the data scientists have come up with as part of feature engineering, and to make sure those are available in a timely manner at prediction time.
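As a small illustration of what such a feature pipeline has to get right, here is a sketch of computing features "as of" a prediction time, using only events that had already happened by then. The event shape, the field names (`user_id`, `ts`, `amount`), and the feature names are assumptions made up for this example, not the pipelines described above.

```python
from datetime import datetime, timedelta


def features_as_of(events, user_id, as_of, window=timedelta(days=30)):
    """Build features for one user from events in [as_of - window, as_of)."""
    start = as_of - window
    amounts = [
        e["amount"]
        for e in events
        # Excluding events at or after `as_of` avoids leaking the future
        # into features used for a prediction made at `as_of`.
        if e["user_id"] == user_id and start <= e["ts"] < as_of
    ]
    return {"txn_count": len(amounts), "txn_total": sum(amounts)}


# Hypothetical events for illustration only.
events = [
    {"user_id": "u1", "ts": datetime(2024, 1, 20), "amount": 10.0},
    {"user_id": "u1", "ts": datetime(2024, 1, 31), "amount": 5.0},
    {"user_id": "u1", "ts": datetime(2024, 2, 1), "amount": 99.0},   # at as_of: excluded
    {"user_id": "u1", "ts": datetime(2023, 12, 1), "amount": 7.0},   # before window: excluded
    {"user_id": "u2", "ts": datetime(2024, 1, 25), "amount": 3.0},   # other user: excluded
]
features = features_as_of(events, "u1", as_of=datetime(2024, 2, 1))
```

The same function can be used to build training rows and to serve live predictions, which helps keep the features a model was trained on consistent with the ones it sees in production.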

Alternative Organizing Model

An alternative to this model is squads/tribes aligned along each business unit: basically, a mini data team in each business unit or concern your company has. This can improve speed but increases tech debt, cost, and headcount. It can work, and it does benefit from being closer to the business, but I feel it has a few drawbacks (even though we had at least one team we ran in that manner):

- Duplication

- Expertise (esp advanced expertise) gaps between teams

- Leadership

- Governance

It’s more difficult in groups where a unit head’s understanding of data and data science is wanting, aspirational, or driven by media articles. It can lead to seriously bad side effects in an organization with weak evidence-backed decision making or poor product-management discipline. Ultimately, you do want to be able to grow the organization into a squad (or federated) model once you’ve got maturity, expertise, education, and governance processes in place, but leaping to this model too early can be a strategic liability (you are choosing between a “lifting all boats” strategy and a “bright spots of promise, but messy all over” strategy if you go this way too early).

How to Make Yourself Attractive to Talent

Talent availability is generally your limiting factor in building out a team, particularly when you’re not one of the FAANGBAT. Your people are the gas that makes your car go vroom. Without topping up the tank as you grow, you are going to stall.

Why should people come and work with you and your company? Remember, it’s your job as the person leading this team to create a conducive environment to attract and grow those people. You cannot leave this up to your People Ops group or your PR group but need to work in conjunction with them to set out your strategy and objectives and have them support and execute on that strategy.

Painting in broad brush strokes, you need to have a two-pronged approach to making yourself attractive to potential team members.

Mid to junior talent will want to know they can learn from the people on the team and enhance their skills and future employment prospects. They also want to know that the team is well-run, logically organized, and there will be low friction for getting things done, as well as clear guidelines for their progression and recognition.

Seniors, particularly those you need to attract to leverage their capability to also hire, generally want to progress their careers to the next level. They generally want to move from a functional to a managerial role and want to understand how to start managing managers (since this is where most senior leads’ careers stall), how to build their own personal brand, build out teams, manage higher-level strategic functions for a group, interact with high-level stakeholders in setting direction for the org, and own a functional domain.

Tall order, but there are some key foundational items you can have in place to make sure that you are more attractive and pass muster from a sanity-check viewpoint in the eyes of most candidates. I’d break the key ones down into this handful:

- A coherent and logical organizing model for the team (see above 😜)

- A career ladder tied to skills

- A coherent interviewing, evaluation, and progression process

- A data science process and deployment infrastructure

- A clear and unambiguous strategy

We’ll talk about those and how to build them if you don’t have them in the next post in the series.